A reflective essay in support of the next digital workforce, cultural renewal and social good

Core thesis: every technological revolution creates new wealth, new institutions and new social stresses. The leaders of the Agentic AI age can become bastions of humanity if they convert capability into public good: education, culture, access, safety, civic trust and opportunity for the younger workforce.

Introduction: every revolution creates a moral question

Every major socio-economic revolution begins with machinery, capital and ambition; but it is judged, finally, by whether it enlarges the human condition. The Industrial Revolution gave Britain and then the world the factory, the railway, the steel mill, the telegraph, the chemical works and the mass-produced book. It also gave society urban overcrowding, dangerous work, child labour, social dislocation and a painful rebalancing between capital and labour. The digital and Agentic AI revolution is different in its tools but similar in its moral challenge. It places reasoning systems, autonomous agents, synthetic content, robotics and data-driven decision-making at the centre of the economy. It promises productivity, discovery and new forms of abundance, but it also threatens to fracture opportunity unless we build a social architecture around it.

The question, therefore, is not simply whether AI will be powerful. It clearly will be. The question is whether the builders, owners and governors of this new capability can become stewards of humanity as well as stewards of enterprise value. History suggests that revolutionary wealth can be converted into public good when it is channelled into institutions: libraries, galleries, universities, museums, model villages, hospitals, scholarships and cultural foundations. The task now is to translate that older covenant into the digital philanthropy age: not as public relations, not as indulgence, but as a structural commitment to arts, culture, education, young people, ethical skills and human agency.

The Industrial Revolution and the rise of institutional philanthropy

The first Industrial Revolution did not arrive as a neat story of progress. It was a complex equation of invention, capital, migration, hardship, enterprise and social reform. New industrial fortunes were created at remarkable speed, often in places where the civic infrastructure had not yet caught up with the scale of change. In the textile towns, the ports, the coalfields and the steel cities, private wealth and public need stood side by side. The railway could shrink distance; the factory could increase output; but neither could automatically create dignity, literacy, culture or shared prosperity.

This is where the great industrial philanthropists matter. Andrew Carnegie’s story is perhaps the clearest example. Having made immense wealth in steel, Carnegie argued in The Gospel of Wealth that the central problem of the age was the administration of wealth in a way that preserved social harmony. His most famous institutional expression of that principle was the library. Between 1886 and 1919, Carnegie’s donations funded 1,679 new public library buildings in the United States alone, according to the U.S. National Park Service. Those buildings were not merely book repositories. They were civic engines of self-education. They helped working people, immigrants and young people gain access to knowledge that had previously been the preserve of the privileged.

The pattern repeated in different forms in Britain. Sir Henry Tate converted part of a sugar fortune into a national cultural legacy by gifting his collection of contemporary paintings to the nation, forming the nucleus of what became Tate Britain. William Hesketh Lever, whose wealth came from soap and consumer products, is remembered not only for industrial success but also for Port Sunlight, the Lady Lever Art Gallery and the Leverhulme Trust’s continuing support for research and education. George Cadbury and the Cadbury family built Bournville around the idea that industrial employment should be connected to housing, education, green space and a better quality of life for workers and their families. Henry Wellcome’s pharmaceutical fortune was ultimately converted into one of the world’s most significant health-research endowments, with the Wellcome Trust established after his death in 1936 to improve health through research.

These examples were not perfect. Industrial philanthropy must be viewed honestly, with attention to the power imbalance between employer and worker, the paternalistic assumptions of the era, and the fact that charitable giving could not, by itself, correct all structural inequalities. Yet the lasting lesson is profound. When industrial wealth funded durable public institutions, it extended the benefits of a revolution beyond the immediate owners of capital. It gave society ladders: ladders of literacy, culture, health, education and aspiration.

Arts and culture as democratic infrastructure

One of the most important lessons from the industrial age is that philanthropy at its best did not treat arts and culture as decorative extras. It treated them as public infrastructure. A gallery, a library, a theatre, a museum, a park or a music hall gave working people a form of participation in civilisation that was not defined solely by labour. Culture became a counterweight to the machine. It reminded society that a person is not only a unit of productivity; a person is a citizen, a creator, a reader, a performer, a parent, a dreamer and a contributor.

This is directly relevant to Agentic AI. The risk of the AI age is that productivity becomes the only measurement. If all that is counted is process speed, automation rate, cost reduction and margin improvement, then the revolution will be economically impressive but socially thin. The arts and culture must therefore sit at the centre of digital philanthropy. AI can help preserve endangered languages, open archives, support community theatre, widen access to music and visual arts, make museums more interactive, and allow young creators to produce work that once required expensive equipment or privileged networks. But it must be done in a way that respects copyright, provenance, attribution and human creativity.

The next digital patronage should not merely fund elite cultural institutions. It should fund creative access at community level: youth studios, AI-enabled local archives, digital apprenticeships in theatre and media, public-interest datasets for culture, regional creative labs, and new forms of collaboration between artists and technologists. The industrialists who funded libraries understood that access to knowledge was a leveller. The AI giants must understand that access to creative tools can be a leveller too, provided that the human artist remains visible, respected and fairly rewarded.

Young people and the next workforce covenant

The most urgent social question of the Agentic AI age is the future of the younger workforce. Industrial Britain often absorbed young people into physical work before it had built a proper architecture of education and protection. Historians have shown the severe reality of child labour in the late eighteenth and early nineteenth centuries. We should not make an equivalent mistake in the digital age by allowing young people to become casual passengers in systems they do not understand, cannot govern and cannot economically influence.

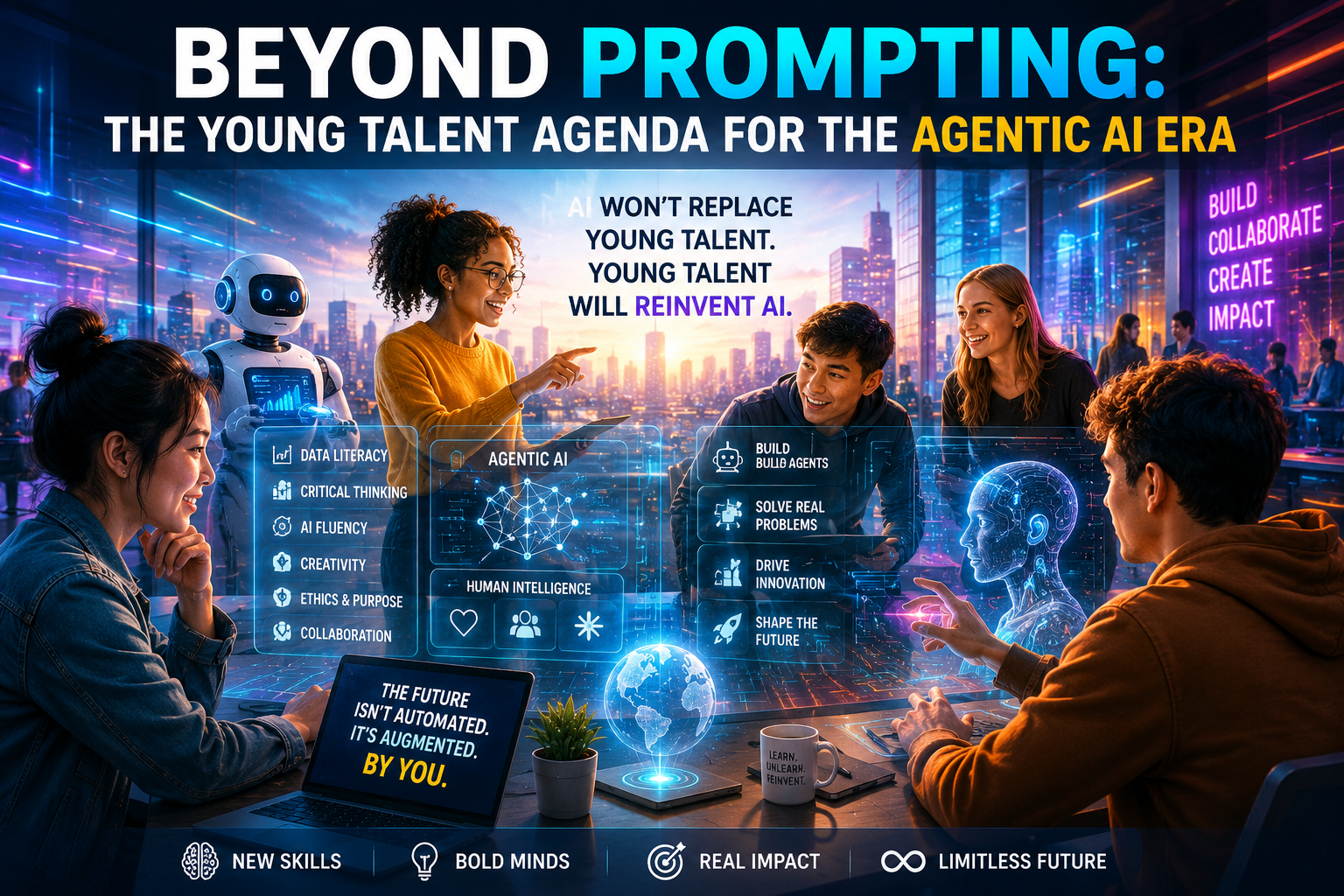

The next workforce covenant must begin with capability. Young people need AI literacy, but not in the superficial sense of knowing how to write prompts. They need to understand data, ethics, verification, model limitations, cyber risk, intellectual property, human-centred design, critical thinking, collaboration and the difference between automation and judgement. They need to learn how to work with agents, supervise agents, challenge agents and build agentic workflows that are safe, explainable and productive. They also need the confidence to ask moral questions: Who benefits? Who is excluded? What is being measured? What is being hidden? What happens when the system is wrong?

This is why philanthropy in the AI age should not only fund scholarships after the fact. It should create living bridges between education and enterprise. It should support apprenticeships, fellowships, local AI academies, civic innovation studios, arts-and-technology residencies, teacher training, open courseware and safe sandboxes where young people can practise with real tools on real problems. In previous blog posts I have argued that the next digital workforce will not be created by software alone. It will be created by the deliberate combination of enterprise strategy, upskilling, governance, cultural confidence and human imagination. Agentic AI makes that argument more urgent, not less.

The AI giants and the digital philanthropy age

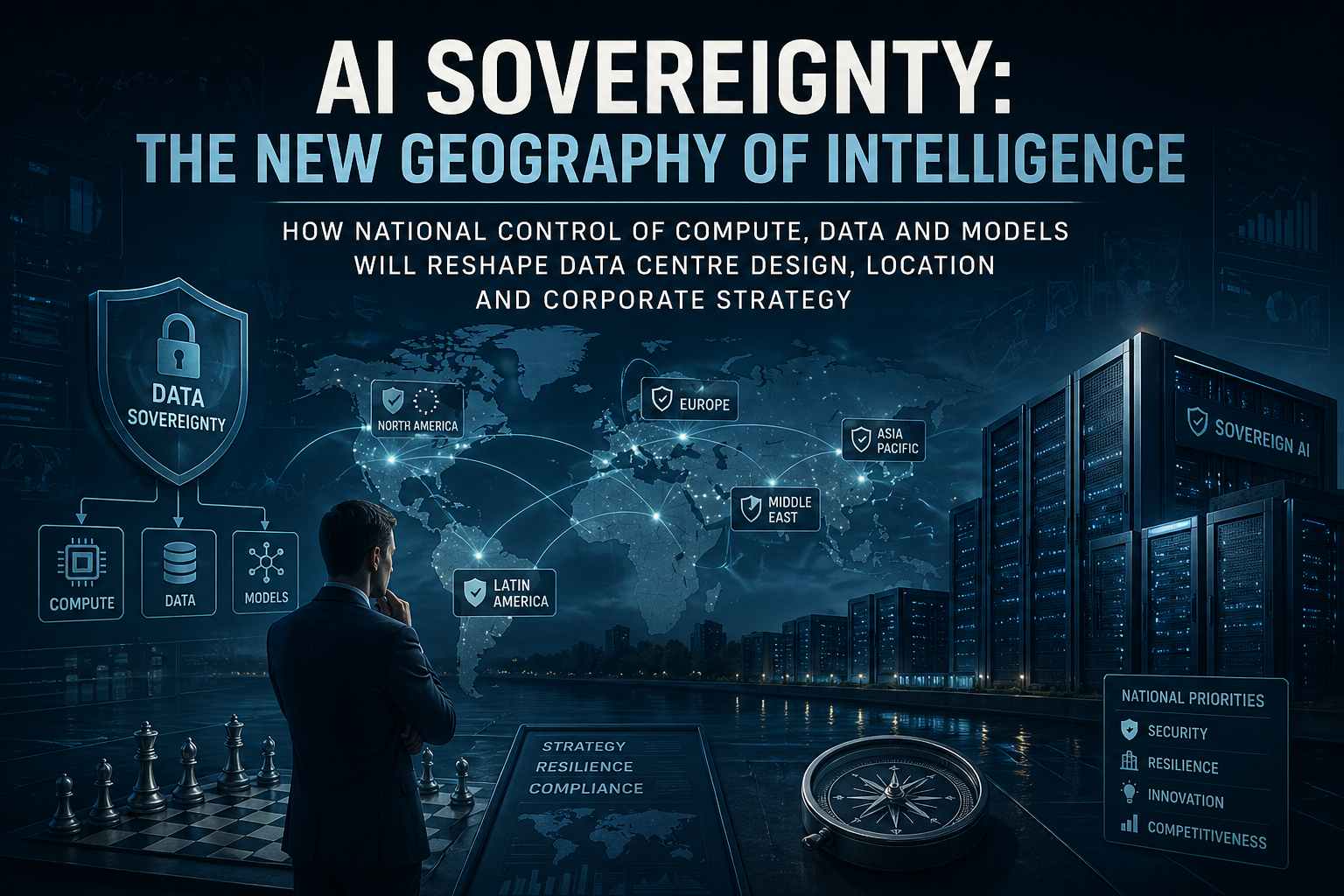

The current AI revolution is being shaped by a small number of extraordinary organisations: model builders, cloud providers, semiconductor companies, frontier AI laboratories, platform companies and the foundations connected to them. They have the ability to influence education, labour markets, culture, research, healthcare, security and public administration at a scale that earlier industrialists could barely imagine. That power brings a direct responsibility.

Encouragingly, there are signs that this responsibility is being recognised. In May 2026, Reuters reported that the OpenAI Foundation committed $250 million to help workers and economies navigate AI disruption, including research on labour-market impact and support for communities affected by automation. The same month, Anthropic and the Gates Foundation announced a $200 million partnership to support AI-related public goods in areas including health and education. Google has also announced a $1 billion initiative to support AI training and tools for U.S. higher education institutions and nonprofits. Microsoft has made large commitments to AI and cloud education, including programmes aimed at equipping millions of people with AI skills. These are significant signals, not because they solve the problem, but because they indicate the shape of a possible new covenant.

However, digital philanthropy must go beyond donations, credits and announcements. The lesson from Carnegie is not simply that he gave money; it is that he helped create institutions which survived him. The lesson from Wellcome is not simply that wealth was endowed; it is that an independent mission was built around research for human health. The lesson from Bournville and Port Sunlight is that the social setting of work matters. The lesson from Tate is that cultural access can be a national asset.

The AI giants can therefore become bastions of humanity if they adopt five practical commitments. First, they should build permanent public-interest institutions, not only short-term grant programmes. Secondly, they should support independent evaluation of AI’s social and labour-market effects, including uncomfortable findings. Thirdly, they should fund the cultural and creative commons with respect for artists, writers, performers and local communities. Fourthly, they should place young people at the centre of AI transition, especially those outside elite educational pathways. Fifthly, they should treat AI governance, safety, transparency and inclusion as philanthropic duties as well as regulatory obligations.

Agentic AI for social good

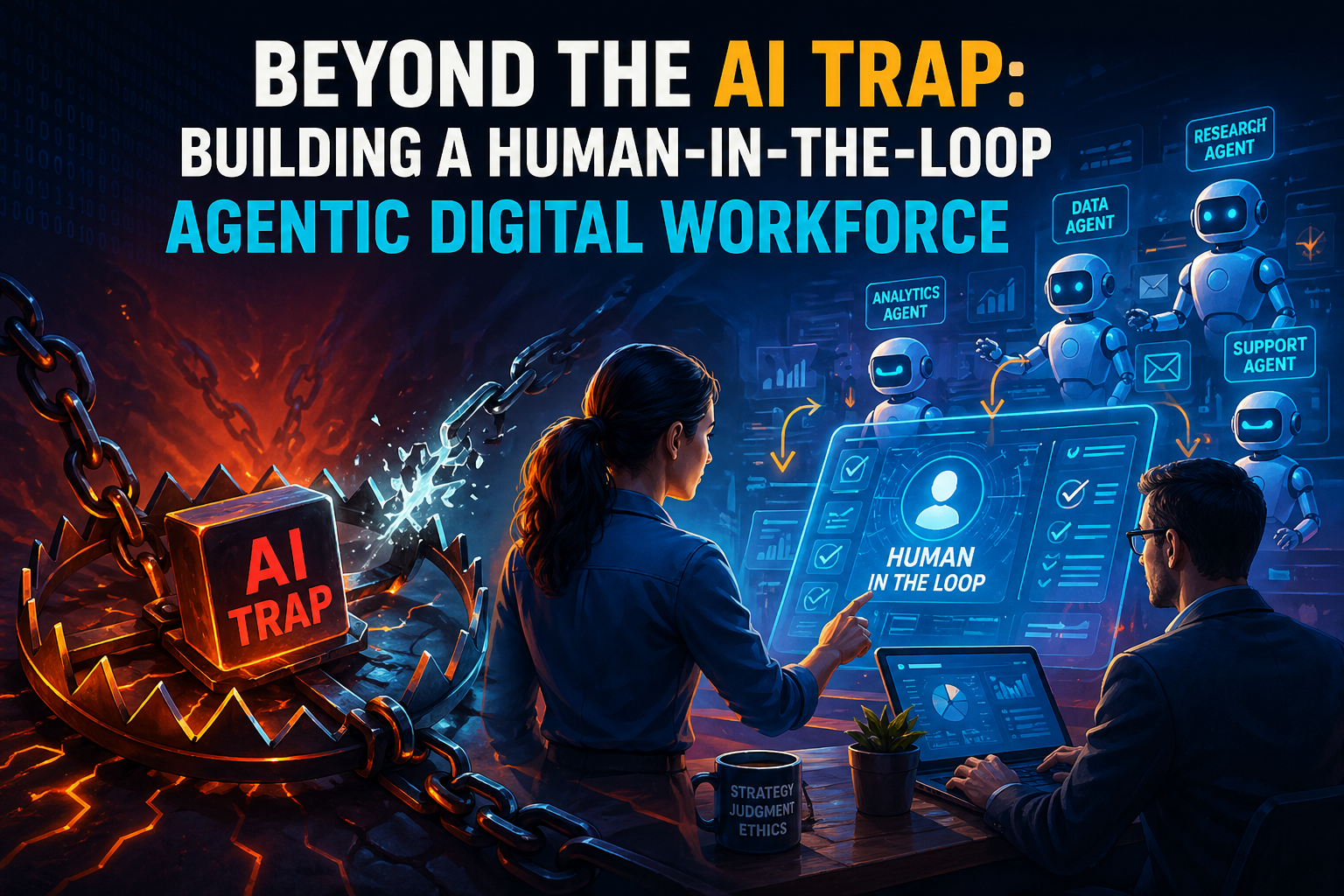

Agentic AI is particularly important because it moves AI from a passive tool to an active collaborator. Properly designed, AI agents can help a charity write funding bids, help a community group map local needs, help a small theatre produce accessible materials, help a young apprentice learn a technical skill, help a local authority identify road defects, help a doctor triage information, help a teacher personalise support, or help a social enterprise manage complex workflows. The potential for social good is not abstract. It is operational.

But agentic systems also carry risk. If they are poorly governed, they can make decisions too quickly, reproduce bias, create plausible falsehoods, obscure accountability or displace human judgement. The answer is not to stop progress; the answer is to civilise progress. That means building AI Canvas methods, readiness assessments, governance councils, audit trails, human-in-the-loop controls, ethical procurement models and clear responsibility structures. In the same way that industrial society eventually developed safety standards, labour protections and public education, the AI age must develop the civic protocols of intelligent automation.

The opportunity is to use Agentic AI as an amplifier of social imagination. It can help philanthropists identify gaps, measure outcomes, connect donors with projects, reduce administrative waste, and support smaller organisations that lack professional grant-writing capacity. It can also democratise expertise. A young person in Liverpool, Birmingham, Nairobi or Kuala Lumpur should be able to access tools that help them learn, create, test, build and contribute. That is the real promise: not an AI revolution that merely concentrates capability, but one that distributes agency.

A new model: from charitable giving to capability giving

The digital philanthropy age should move from the idea of charitable giving to the deeper idea of capability giving. Money matters, but capability is more durable. Capability giving means giving communities access to tools, training, data, compute, mentorship, governance frameworks, cultural platforms and routes into employment. It means building the conditions in which people can solve their own problems, tell their own stories and shape their own futures.

This requires partnership. Philanthropy cannot operate in a vacuum. The strongest historical examples often involved cooperation between benefactors, civic authorities, educators, architects, librarians, artists, doctors and reformers. The same will be true now. AI philanthropy should connect model companies with universities, schools, local authorities, cultural institutions, unions, charities, enterprise bodies and young people themselves. It should respect place. The needs of a post-industrial town, a rural school, a creative cluster, a maritime city, a developing economy and a global health network are not the same.

For the C-suite, this is not merely a moral argument. It is a strategic argument. Organisations that invest in the next workforce, responsible AI and social legitimacy will be more resilient. They will understand risk earlier. They will attract better talent. They will be trusted partners to government and society. They will avoid the fragile arrogance that sometimes accompanies technological dominance. Above all, they will understand that in a complex economy, trust is an asset.

Conclusion: hope in the future

In my opinion there is a great deal of hope in the future. History does not tell us that every revolution becomes humane by accident. It tells us the opposite. It tells us that progress becomes humane when capital, conscience, governance and imagination are deliberately joined together. The Industrial Revolution created wealth and disruption; philanthropy at its best converted some of that wealth into libraries, galleries, universities, parks, villages, health research and opportunity. The Agentic AI revolution now asks for its own version of that settlement.

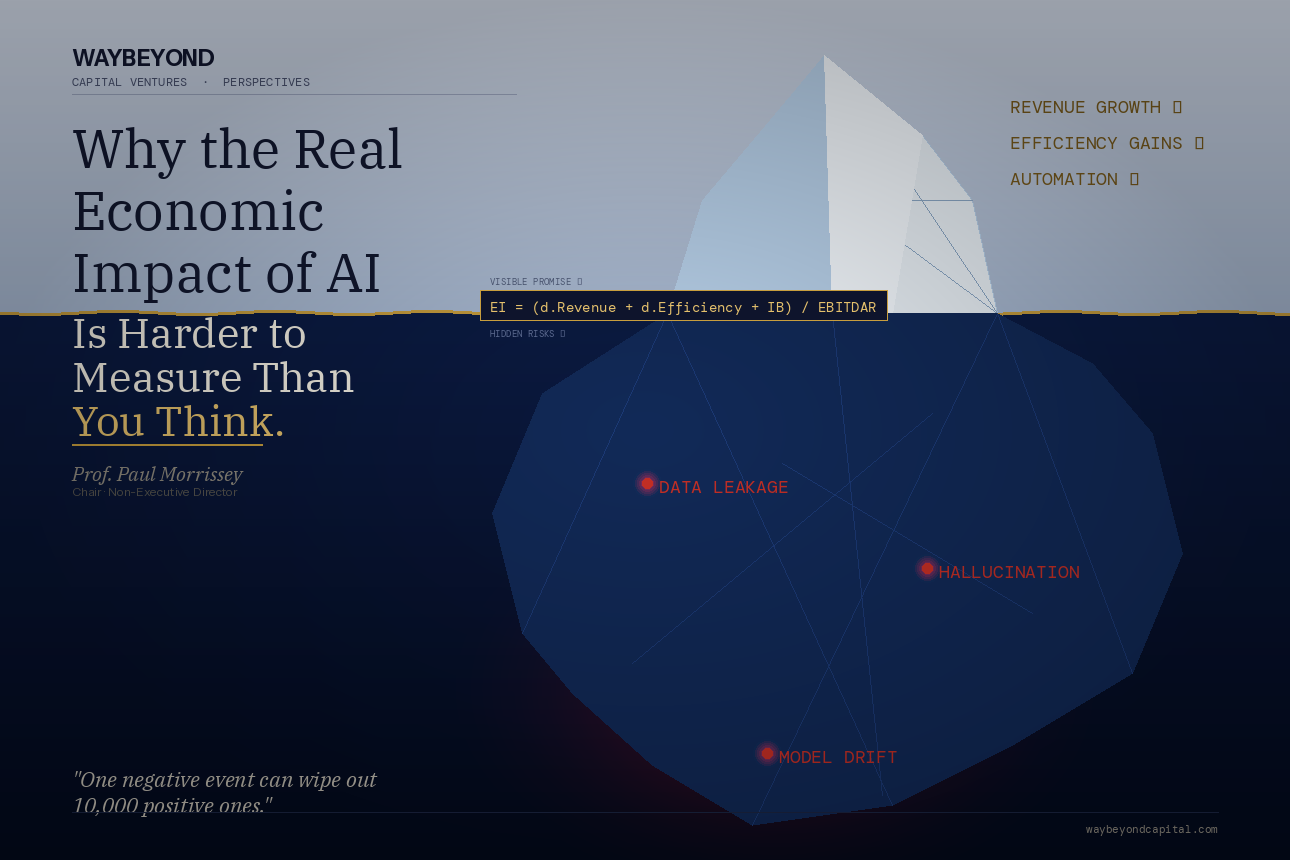

The giants of AI can become bastions of humanity, but only if they understand that the path to success runs through a complex equation. The equation includes productivity, yes, but also dignity. It includes innovation, but also culture. It includes automation, but also human agency. It includes shareholder value, but also social value. It includes safety, transparency, education, creativity and the young workforce that will inherit the systems we are now building.

The challenge is not to be nostalgic about Carnegie, Cadbury, Tate, Leverhulme or Wellcome. The challenge is to learn from the architecture they left behind: durable institutions, public access, civic ambition and the belief that wealth created by a revolution carries obligations beyond the balance sheet. If the AI age can absorb that lesson, then digital philanthropy can become one of the great civilising forces of the twenty-first century. That is the hopeful path: to ensure that the most powerful technology of our time is not merely intelligent, but wise enough to serve humanity.

Selected references and source notes

1. Andrew Carnegie, The Gospel of Wealth, originally published 1889; Carnegie Corporation of New York historical text.

2. U.S. National Park Service, Carnegie Libraries: The Future Made Bright, noting 1,679 Carnegie-funded public library buildings in the United States between 1886 and 1919.

3. Tate & Lyle, Henry Tate biography, noting his gift of contemporary paintings to the nation, forming the nucleus of the Tate Gallery.

4. Bournville Village Trust, Bournville’s Story and Foundation of Bournville Village Trust, noting the Cadbury family’s model village and trust legacy.

5. Leverhulme Trust, History of the Trust, noting William Hesketh Lever’s industrial fortune and the Trust’s education and research mission.

6. Wellcome, History of Wellcome, noting the Wellcome Trust’s foundation after Henry Wellcome’s death in 1936 and its mission to improve health through research.

7. Jane Humphries, Childhood and Child Labour in the British Industrial Revolution, Economic History Review, 2012.

8. Reuters, OpenAI Foundation commits $250 million to help workers, economies navigate AI disruption, 27 May 2026.

9. Reuters, Anthropic and Gates Foundation launch $200 million partnership for AI in health and education, 14 May 2026.

10. Reuters, Google commits $1 billion for AI training at U.S. universities, 6 August 2025.

11. Microsoft, AI and digital skills commitments, as reported in 2025 public coverage of its AI education and nonprofit programmes.

Note: This essay uses historical examples as interpretive parallels rather than direct equivalences. It recognises both the achievements and limitations of industrial philanthropy and applies those lessons to the emerging digital philanthropy age.