Why the Real Economic Impact of AI Is Harder to Measure Than You Think

Over the past year I have had many conversations with executives, board members, and investors about Agentic AI and the profound changes it promises to bring to organisations. The tone of these discussions is usually enthusiastic, and understandably so.

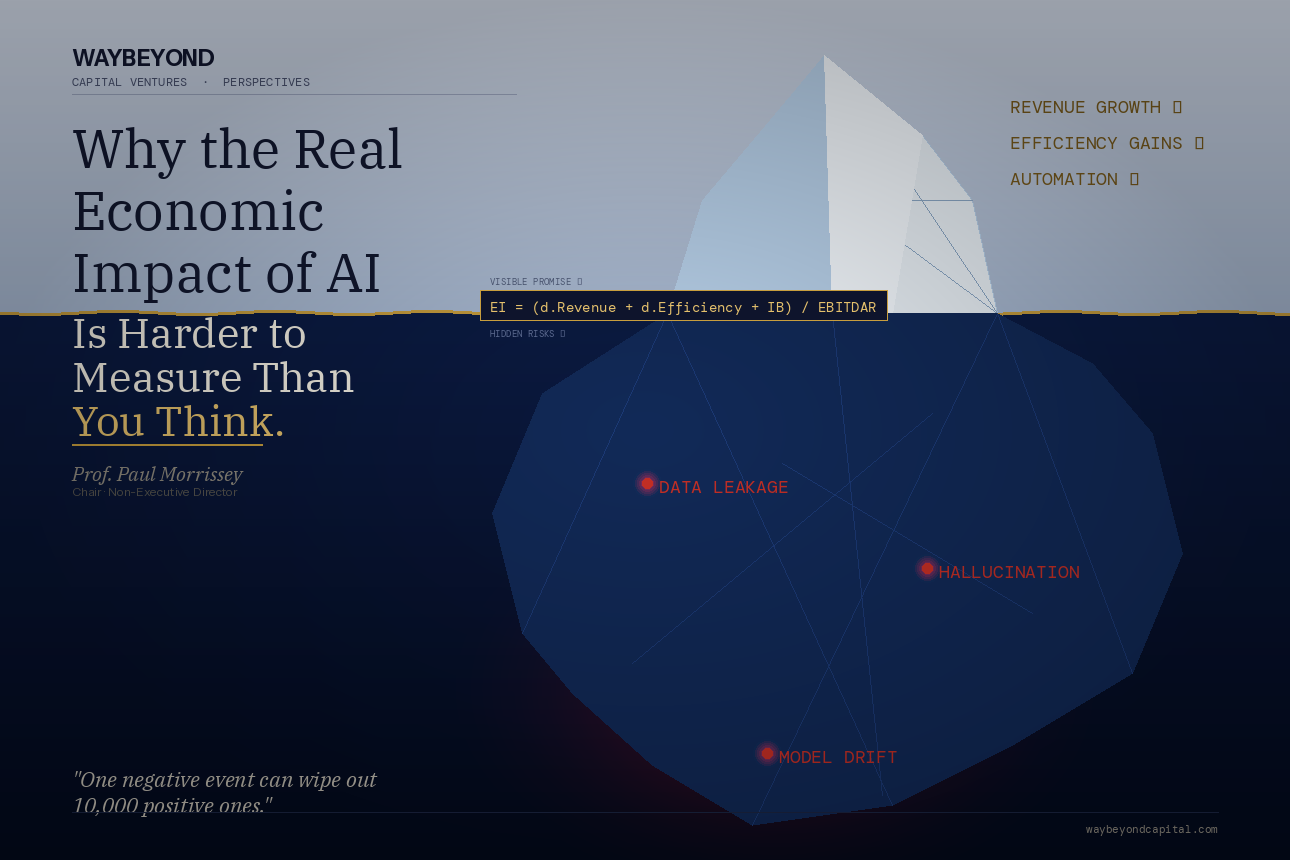

We are told that AI agents will unlock new revenue streams, dramatically increase productivity, and automate complex workflows across the enterprise. Marketing teams expect faster campaign creation, customer service leaders expect 24-hour support automation, finance departments expect automated reconciliation, and operations teams expect continuous optimisation. In short, everyone is focused on the upside.

But there is a question I often ask in boardrooms and strategy sessions that tends to bring the conversation to a pause:

How do you actually measure the real economic value of AI?

Because while everyone is excited about the promise of increased revenue and operational efficiency, far fewer organisations are measuring the full economic impact of AI — including the hidden risks that come with deploying autonomous or semi-autonomous AI agents. And those risks can be significant.

The Problem with Simplistic ROI Thinking

Most AI business cases presented to CFOs follow a predictable format.

They focus on two numbers:

- Revenue growth

- Operational efficiency

This is a reasonable starting point. AI can absolutely help organisations generate new revenue opportunities and reduce operational costs. But it is only part of the picture. What is often missing from these models is a third and much more complex factor: Intangible Benefits (IB).

These can be positive — such as improved customer experience, faster innovation, or stronger competitive positioning.

But they can also be negative, and when negative intangibles occur in the context of AI systems, they can escalate quickly.

Before turning to those risks, it helps to introduce a simple framework I often use when discussing AI economics with executive teams.

A Practical Metric for Measuring AI Value

One way to frame the discussion with finance leaders — particularly the CFO, who is usually the most sceptical person in the room — is to express the impact of AI in terms of Economic Impact (EI) relative to the organisation’s financial scale.

The metric I use is the following:

Economic Impact (EI) = (Δ Revenue + Δ Efficiency + Intangible Benefits) / EBITDAR

Where:

- Δ Revenue represents the incremental revenue generated by AI initiatives (use cases)

- Δ Efficiency represents measurable improvements in productivity or cost reduction

- Intangible Benefits (IB) capture both positive and negative strategic effects

- EBITDAR represents Earnings Before Interest, Taxes, Depreciation, Amortisation and Restructuring (or Rent), which effectively normalises the organisation’s operating scale

Why divide by EBITDAR?

Because doing so contextualises the Economic Impact (EI) relative to the size of the organisation. A £5 million efficiency gain means something very different to a company with £20 million EBITDAR than it does to one with £500 million.
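As a minimal sketch, the EI formula and the scale comparison above can be expressed in a few lines of Python. The function name `economic_impact`, the check for a non-positive EBITDAR, and the choice to set the revenue and intangible terms to zero are assumptions made purely for illustration; only the £5 million, £20 million and £500 million figures come from the example above.

```python
def economic_impact(delta_revenue, delta_efficiency, intangible_benefits, ebitdar):
    """EI = (Delta Revenue + Delta Efficiency + Intangible Benefits) / EBITDAR."""
    # Assumption: the ratio is only meaningful for a positive EBITDAR.
    if ebitdar <= 0:
        raise ValueError("EBITDAR must be positive for the ratio to be meaningful")
    return (delta_revenue + delta_efficiency + intangible_benefits) / ebitdar


# The same £5 million efficiency gain at two operating scales (figures in £ million).
# Revenue and intangible terms are zero here only to isolate the effect of scale.
small = economic_impact(0.0, 5.0, 0.0, ebitdar=20.0)
large = economic_impact(0.0, 5.0, 0.0, ebitdar=500.0)

print(f"EI at £20m EBITDAR:  {small:.0%}")   # 25%
print(f"EI at £500m EBITDAR: {large:.0%}")   # 1%
```

The identical gain is a quarter of one organisation's EBITDAR and one hundredth of the other's, which is the contrast the ratio is meant to surface.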

This framework gives the CFO a common financial language in which to evaluate AI initiatives. But the most important component of the equation is the one that is most frequently ignored: Intangible Benefits (IB).

The Hidden Side of Intangible Benefits (IB)

When organisations present AI initiatives internally, intangible benefits are usually framed in positive terms:

- improved decision-making

- faster response times

- enhanced customer experiences

- stronger brand perception

All of these are real.

However, the negative intangible impacts that can emerge from poorly supervised AI systems are often underestimated, particularly when organisations begin deploying autonomous AI agents.

AI agents are powerful because they can act independently — analysing information, making decisions, and executing tasks across multiple systems. But autonomy without governance creates new categories of risk.

Three deserve careful attention.

1. Data Leakage

AI systems depend heavily on data.

When those systems are connected to internal knowledge bases, customer records, contracts, or intellectual property, the risk of data leakage becomes significant.

This can occur in multiple ways:

- sensitive data being exposed through prompts or responses

- proprietary information being incorporated into external models

- confidential customer data being accessed or transmitted improperly

The consequences can range from regulatory breaches to loss of competitive advantage. In highly regulated sectors — such as telecommunications, healthcare, or finance — the reputational damage alone can be considerable.

2. Hallucination and Customer Trust

Large language models and AI agents can sometimes generate hallucinations — confident but incorrect responses.

In internal workflows this may simply create inefficiencies.

In customer-facing systems, however, the consequences can be more serious.

Imagine an AI agent:

- giving incorrect billing information

- misrepresenting product capabilities

- generating misleading compliance guidance

The immediate impact is poor customer experience. But the deeper issue is trust erosion.

Trust, once lost, is extremely difficult to rebuild.

3. Model Drift

AI systems are not static.

Over time, models can experience drift — where their behaviour gradually deviates from expected performance.

This may occur because:

- the underlying data environment changes

- feedback loops alter model behaviour

- system updates introduce unintended bias or errors

If drift is not detected early, the organisation may continue operating under the assumption that AI outputs remain accurate. In reality, decision quality may already be deteriorating.
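One simple way to catch this early is to compare the accuracy of recently reviewed outputs against an agreed baseline. The sketch below is an illustration only, not a prescribed monitoring method: the function name `drift_detected`, the 5-point tolerance, and the sample figures are all hypothetical, and it assumes a human reviewer has marked each sampled output as correct or incorrect.

```python
from statistics import mean


def drift_detected(baseline_accuracy, recent_outcomes, tolerance=0.05):
    """Return True when recent accuracy falls more than `tolerance` below baseline.

    recent_outcomes: list of booleans, True where a reviewed AI output was correct.
    """
    if not recent_outcomes:
        raise ValueError("need at least one reviewed outcome")
    recent_accuracy = mean(1.0 if ok else 0.0 for ok in recent_outcomes)
    return recent_accuracy < baseline_accuracy - tolerance


# Hypothetical review sample: 16 of 20 recent outputs were judged correct (80%).
recent = [True] * 16 + [False] * 4
print(drift_detected(baseline_accuracy=0.92, recent_outcomes=recent))  # True
```

Here 80% recent accuracy sits below the 87% floor (92% baseline minus the 5-point tolerance), so the check flags drift. In practice the baseline, sample size and tolerance would need to be agreed for each use case.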

Reputation: The Fragile Asset

When organisations discuss AI benefits, they often overlook the fact that reputation is one of the most valuable assets any company possesses.

And reputation behaves asymmetrically. I often summarise it with a simple rule of thumb:

One negative event can wipe out 10,000 positive ones.

In the context of AI, this could be:

- a widely reported data breach

- an AI-generated decision perceived as unethical

- a discriminatory algorithmic outcome

- a regulatory violation resulting from automated decision-making

These events do not just affect operations. They affect brand trust, customer loyalty, regulatory scrutiny, and investor confidence, all of which belong squarely within the Intangible Benefits (IB) component of the economic impact equation.

Why Governance Matters

None of this should be interpreted as an argument against AI. Far from it.

AI will undoubtedly become one of the most powerful productivity tools organisations have ever deployed. But the organisations that succeed will not simply deploy AI faster than others. They will deploy it more responsibly and more intelligently.

That means introducing:

- strong AI governance frameworks

- human oversight for critical decisions

- continuous model monitoring

- robust data protection mechanisms

- clear ethical guidelines for AI deployment

In other words, AI should augment human judgement — not replace it entirely.

The Conversation CFOs Need to Have

Whenever I present the Economic Impact (EI) equation to executive teams, I emphasise one point. The equation is not just a financial model. It is a governance conversation.

It forces leadership teams to ask:

- What new revenue can AI truly create?

- What measurable efficiencies will it deliver?

- What positive intangible benefits will it generate?

- And critically, what negative intangible risks might it introduce?

Only by considering all four elements together can organisations measure the true economic value of AI. If the numerator of the equation leaves out negative intangibles that no one is monitoring, the apparent economic impact will be overstated.

And when those risks materialise, the consequences can be sudden and severe.
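To make the overstatement concrete, the sketch below computes EI twice for the same business case: once with only the positive intangibles that usually appear in the deck, and once with a negative intangible included. Every figure is hypothetical, and so is the idea of putting a single monetary estimate on a trust-eroding incident; the point is only to show the direction and size of the gap.

```python
def economic_impact(delta_revenue, delta_efficiency, intangibles, ebitdar):
    """EI = (Delta Revenue + Delta Efficiency + sum of signed intangibles) / EBITDAR."""
    return (delta_revenue + delta_efficiency + sum(intangibles)) / ebitdar


# All figures are hypothetical, in £ million.
positive_intangibles = [2.0]    # e.g. improved customer experience
negative_intangibles = [-6.0]   # e.g. estimated cost of a trust-eroding incident

reported = economic_impact(4.0, 5.0, positive_intangibles, ebitdar=100.0)
adjusted = economic_impact(
    4.0, 5.0, positive_intangibles + negative_intangibles, ebitdar=100.0
)

print(f"Reported EI: {reported:.0%}")   # 11%
print(f"Adjusted EI: {adjusted:.0%}")   # 5%
```

With these illustrative numbers, leaving the negative intangible out of the numerator more than doubles the apparent economic impact.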

Final Thoughts

AI agents will undoubtedly transform how organisations operate. They will create extraordinary opportunities for automation, innovation, and growth. But as with all powerful technologies, the benefits must be balanced with careful governance and realistic economic measurement. The organisations that thrive in the AI era will not be those that chase automation blindly. They will be those that understand both the upside and the downside and measure the true economic impact accordingly.

Why This Thinking Matters in AI Readiness

This type of thinking is precisely why I developed my AI Readiness Assessment methodology. Too many organisations approach AI adoption as a technology deployment exercise rather than a strategic capability transformation.

The purpose of the AI Readiness Assessment is to help organisations understand:

- where they currently stand with AI maturity

- how strong their governance and risk frameworks are

- whether their data foundations are ready for AI deployment

- how AI initiatives can be measured in terms of real economic impact

More importantly, it allows organisations to design an AI journey that is measurable, risk-aware, and sustainable. In other words, it helps organisations capture the upside of AI while ensuring the hidden risks — the ones that often sit inside the “Intangible Benefits” component of the equation — are properly understood and managed.

Because the real challenge of AI is not deploying it.

The real challenge is deploying it responsibly, strategically, and in a way that strengthens the organisation rather than exposing it to unnecessary risk.